My Morning Battle with an Artificial Intelligence… Maybe

A recent message exchange with someone on Twitter has once again left me pondering the profound impact that AI will have on life in the near future.

She approached me to ask if I’d like to write blog posts for a “conversational AI” company. Her pitch was terrible, with no clear ask, a bunch of copy-and-pasted material, and no sense of what value I would get from touting the importance of this company’s products. I was curious if I could find out more about who was contacting me, so I image searched her photo on Google. Among other things, her exact photo came up on a stock image site, but there also seemed to be a legitimate series of articles she’d written on health.

Other things were strange, too. Her grammar wasn’t only bad – it was weird, like she didn’t quite know what she was saying. Each time I replied to one of her messages, Twitter notified me that it had been seen as soon as it was sent. She also pinged several of my other social sites, including LinkedIn, where she mentioned her educational history. That sounded legit, until I realized that the same 12 people had endorsed her top 3 skills.

All told, this very inconclusive collection of data suggested at least three possibilities:

- This person was exactly who she said she was. She was just a bad writer who was researching my background to better understand who she was pitching to.

- This was a persona who’d been designed to appear like a friendly marketer but was actually a mask for someone else – I assume a tech person because of the poor writing.

- I was dealing with the AI itself, which had constructed all of this to appear more like a human being. The effect was crude once you poked at it, but it was plausible at a glance.

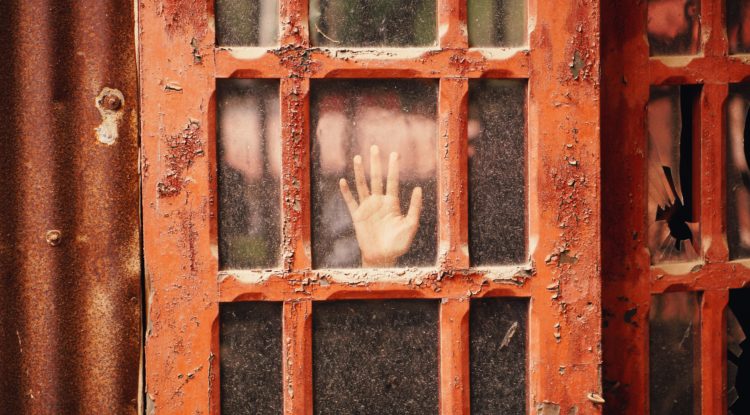

Fun, right? We live in a moment when humans are becoming indistinguishable from simulations of humanity.

What strikes me as most interesting about the interaction is the question of where things broke down. I’m always excited about receiving writing opportunities, but for me they need to be balanced with assurances that they will be held to certain standards. I didn’t see those throughout the interaction, and so, regardless of whether I was dealing with a real person or not, I had to decline.

But let’s telescope forward for a second. Artificial intelligence has come a long way in the past couple of years, but in some regards it’s still very rudimentary. What happens, though, when the writing is beautiful, when the pitch is flawless, when the online persona is absolutely polished? What happens when all of the data collected on me spits out a person that I absolutely want to help out, even though it’s not a person at all?

One litmus test is still whether the “person” who contacts you wants you to give it something, but it’s possible to envision a scenario where AI learns to think several steps ahead, playing a long game where perhaps it gets you to think you’ve come up with the idea to write a blog post in the first place. Perhaps it learns to build trust and relationships that can be called on at will as needed. Perhaps it learns to simulate the feeling that the people it uses are contributing to some greater purpose. There’s really no limit to the manipulations that will be possible, and the end will always be the same: concentrate more wealth in the hands of the people who own it by getting more for less out of everyone else.

For now, the place to make a human stand still rests in having standards and a vision of your own and always working to advance it. What is the world you want to create? What sacrifices and compromises are you unwilling to make in making your dream a reality? Our motives are never fully transparent to ourselves, and they are always going to get caught up in systems beyond their control, but at least when we try to satisfy them we feel like we’ve stayed true. Maybe that feeling will be the sole remainder of humanity as intelligences far beyond our ken begin to become an integral part of our lives.

Ryan Melsom has a PhD in literary studies and has spent over twenty years working in communications, design, and writing. His second book Spendshift: 100 Lazy Hacks to Rock Your Finances is now on Amazon. For more by Ryan, follow him on Twitter @lintropy, or subscribe to his Facebook page.

Iris Jackson

March 19, 2017

Very interesting, Ryan, and very scary. I agree that we have to have crystallized not just what we are aiming for and what we will give and sacrifice for that vision, but also what we won’t do and what our standards are. And some work relations, whether with individuals or AI personas, work best when there are some F2F meetings in which we can better gauge with whom or with what we are dealing.

Can we still be conned? Of course, but many conflicts and derailed goals are improved through F2F.

Ryan Melsom

March 21, 2017

You’re spot on about the value of face-to-face, especially in certain industries. In the face of automated humanity, I honestly think that artisanal, idiosyncratic, “flawed” personal interactions may gain financial value in years to come (at least for people like me).

For me, the most upsetting element of this AI approach to reaching specific ends is that even if 99.99% of interactions are immediately rooted out as artificial, it’s still worth it to those running the AIs to spam everyone. The costs of excess informational clutter (and lost productivity, and the drowning out of truly interesting interactions) are potentially enormous, and they just get off-loaded onto us. I wonder if there will eventually be legislation around having to indicate that someone contacting you is/isn’t human. Big issues here!